Changing Our Mind

- Jeff Hulett

- Apr 18, 2023

- 53 min read

Updated: Aug 5, 2023

Not making a decision is a decision.

Photo by: Michelle Pescosolido

“It is human nature to get anchored in our beliefs. The challenge is knowing when to drop anchor and sail away.”

Jeff Hulett is the Executive Vice President of the Definitive Companies, a decision sciences firm. Jeff's background includes data science and decision science. Jeff's experience includes leadership roles at consulting firms, including KPMG and IBM. Jeff also held leadership roles at financial services organizations such as Wells Fargo and Citibank. Jeff is a board member and rotating chair at James Madison University.

Changing our minds can be difficult. It seems even more challenging to convince someone else to change their mind. Beliefs tend to stir tribal emotions, like when our favorite football team wins or our least favorite political candidate wins. Our beliefs, while sometimes of mysterious origin, are foundational when evaluating a decision to change our mind. This article explores how we change our minds, especially as it impacts our personal and professional growth. We use a multi-disciplinary approach, considering research from Decision Science, Economics, Psychology, Neuroscience, and Mathematics. This article provides practical business and science contexts. We feature real-life examples of changing our minds and reaching our goals. We provide decision tools you will find helpful to make the best decisions! We provide a path to approach life in "Perpetual Beta." This is a mindset commitment to belief updating and self-improvement.

Table of contents

Background and Building An Understanding

Our Approach

Belief Inertia

Information Curation

Listening to our Gut

Active Listening and Living In It

Educated Intuition

Expanding our evidence bucket

Pulling the Trigger

Change is not a failure

Build your BATNA

Beware of sunk costs

Conclusion (sort of)

Notes and Musings

Resources

As decision complexity increases

A consulting firm example

A COVID-19 vaccine development example

An investment firm example

A decision-making smartphone app

These resources are provided as an explanatory aside. They appear periodically throughout the article.

Smartphone decision app

1. Background and Building An Understanding

First, why is it important to change our minds? The ancient Greek and Roman philosophers taught that changing our mind is associated with critical thinking and wisdom. For example, Socrates established the importance of asking deep questions that probe our thinking before we accept ideas as worthy of belief. Marcus Aurelius and other Stoics taught the four tenets of Stoicism: wisdom, courage, justice, and moderation. Wisdom and the courage to take "learned action" are core to Stoic beliefs. The ancients did not teach "what" to believe; they taught "how" to think about, form, and change beliefs.

More recently, in some communities, changing our mind is considered a sign of weakness and a lack of tribal commitment. People are naturally tribalistic [i] and some authority figures may use this for political advantage. Examples include suggesting that changing our mind is associated with being "soft," "wishy-washy," or a sign of not being a good leader. Also, with the growth of uncurated data in our current society, it is easy to lose confidence in the information we rely upon to make belief decisions. Sal Khan is an educator, CEO, and founder of Khan Academy, the global education platform. In a recent interview he said:

"I talked to the provost of two leading universities, and they both told me that there’s a secular decline in kids’ ability to be critical thinkers. So, many kids show up at campus already anchored in their points of view. They don’t know how to discourse. They don’t know how to look at data to potentially change their mind and that’s a real problem."

In summary, changing our mind is core to critical thinking and wisdom. In recent times, it seems changing our mind has become more challenging and sometimes politicized. A core thesis of this article is that our individual process for changing our mind is critically important to our personal and professional growth. It is also important to the health of our community and democracy.

For this article, I will inspect my own decision-making processes and include high-impact examples from my career. I will also offer the wise input of many others. To start, I change my mind over time. It is usually an evolving process, not a thunderbolt. I tend to deliberately build an understanding and actively consider changing my mind. A decision to change my mind may result after effectively weighing the evidence about a held belief. I generally have a growth mindset as it relates to belief updating. I consider beliefs as dynamic and available to change as new information becomes available. Generally, beliefs are formed from our experiences, whether from childhood, family, religion, education, professional, or other sources. In this article, we accept our initial belief set as a given. In our notes, we explore how culture and family upbringing impact our initial belief formation. [ii]

This article provides methods and context for how to update your beliefs and take action based on those updates. Eliezer Yudkowsky, a Decision Scientist and Artificial Intelligence theorist [iii], suggests:

"Every question of belief should flow from a question of anticipation."

To this end, we will explore changing our minds by first identifying our beliefs, then evaluating anticipatory evidence supporting, or not supporting, a belief. Finally, we will provide suggestions for acting upon our updated beliefs.

1a. Our Approach

The following is a straightforward approach to changing our mind. Imagine all the evidence you know about a belief could fill a bucket. The individual evidence is represented by rocks of different sizes and colors.

The rock color represents whether the evidence supports your belief or not (to build our mental picture: pro is green, con is red), and

the rock size represents the weight (or importance) of the evidence, either bigger or smaller than the other rocks.

The portion of the bucket filled with pro evidence acts like a belief probability.

So, if 70% of the evidence in the bucket supports our belief, then the belief may be sound, though there is some contrary evidence (30%).
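The bucket arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a tool from this article: the `Rock` class, the `belief_probability` function, and the sample weights are all hypothetical names and numbers chosen to reproduce the 70% example.

```python
from dataclasses import dataclass


@dataclass
class Rock:
    """One piece of evidence (hypothetical structure, for illustration only)."""
    weight: float   # rock size: the importance of the evidence
    supports: bool  # rock color: True = green (pro), False = red (con)


def belief_probability(bucket):
    """Share of the total evidence weight that supports the belief."""
    total = sum(rock.weight for rock in bucket)
    if total == 0:
        # Assumption: with no evidence at all, treat the belief as a coin flip.
        return 0.5
    pro = sum(rock.weight for rock in bucket if rock.supports)
    return pro / total


# Hypothetical bucket: 7 weight units of pro evidence, 3 of con evidence.
bucket = [Rock(4, True), Rock(3, True), Rock(2, False), Rock(1, False)]
print(belief_probability(bucket))
```

Note that two small red rocks and one large green rock can balance out. It is the total weight of each color that matters, not the rock count.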

“The best choices are the ones that have more pros than cons, not those that don’t have any cons at all”

-Ray Dalio - Chairman, Bridgewater Associates

So, what if new evidence is added to the bucket? If the new information is net pro (supporting) evidence, then the belief probability increases. Alternatively, if the new information is net con (contrary) evidence, then the belief probability decreases. For many people, reducing a belief probability is challenging. For some, it creates a mutinous feeling, like one is betraying their tribe. [iv] That is an unfortunate but natural feeling. Rationally updating a belief probability is a critical part of making the best decisions. In section 3, we provide an approach to help overcome the natural feelings keeping us from appropriately changing our mind.

If the supporting belief probability then approaches the 50% threshold, we should seriously consider changing our mind. This approach is meant to provide some structure and flexibility to update as we learn. At a minimum, we should subjectively rank order and compare the existing and newly weighted evidence. Please see our notes for a definition and examples of "evidence." We also discuss how evidence relates to our rock size and color. [v] In our “Other Musings” section we provide a brief description of Bayes’s Theorem and Bayesian Updating. The concepts of conditional probability are at the core of properly “changing our mind.” Understanding the related mathematics is helpful for a deeper understanding of our belief-updating approach. We also provide tools that handle the math in the background.
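As a rough sketch of this update step, the snippet below adds new contrary evidence to a bucket and checks whether the belief probability is near the 50% threshold. The function names, the starting weights, and the 0.05 margin used to interpret "approaches" are assumptions made for illustration.

```python
def belief_probability(pro_weight, con_weight):
    """Fraction of the total evidence weight that supports the belief."""
    total = pro_weight + con_weight
    if total == 0:
        raise ValueError("the bucket is empty")
    return pro_weight / total


def near_threshold(probability, threshold=0.5, margin=0.05):
    """True when the belief probability is at or close to the threshold.

    The margin is an assumed value; the text only says "approaches".
    """
    return probability <= threshold + margin


# Hypothetical starting bucket: 7 units of pro weight, 3 units of con weight.
pro, con = 7.0, 3.0
before = belief_probability(pro, con)

# New net con evidence arrives with a weight of 4 units.
con += 4.0
after = belief_probability(pro, con)

print(before, after, near_threshold(after))
```

In this example the belief drops from 70% to 50%, which is the point where we should seriously consider changing our mind.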

Side Note: As decision complexity increases

For the most part, this article is about “changing our mind” about a belief we encounter in our life experiences. In the decision science world, a “belief” is more generally known as a binary decision alternative, like “I should or should not change my current job.” The different colors and sizes of rocks relate to decision criteria. The decision criteria are evaluated and weighed by assessing the related evidence. The weight of prior evidence provides the probabilistic belief baseline. New evidence is then evaluated and used to update that baseline, producing an updated (posterior) belief probability. This relates to the “Bayesian Updating” introduced in the last section.
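For readers who like to see the mechanics, here is a minimal sketch of a single Bayesian update using Bayes's Theorem. The job example numbers are invented for illustration, and `bayes_update` is a hypothetical helper, not part of any tool referenced in this article.

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Return the posterior probability of a belief after seeing new evidence.

    prior:               belief probability before the new evidence
    p_evidence_if_true:  chance of seeing this evidence if the belief is true
    p_evidence_if_false: chance of seeing this evidence if the belief is false
    """
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1 - prior)
    if denominator == 0:
        raise ValueError("the evidence is impossible under both hypotheses")
    return numerator / denominator


# Belief: "I should stay in my current job." Assumed prior of 70%.
prior = 0.70

# New evidence: being passed over for a promotion. Assume this is less likely
# if staying is the right call (20%) than if it is not (60%).
posterior = bayes_update(prior, p_evidence_if_true=0.20, p_evidence_if_false=0.60)
print(round(posterior, 4))
```

With these assumed numbers, one piece of contrary evidence moves the belief from 70% to below 50%. The posterior then becomes the prior for the next piece of evidence.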

Downstream decisions are often more complicated. After one changes their mind about a belief, it is often quickly followed by making up their mind among multiple choices. For example, if one changes their mind about their current job, the next step is finding and assessing multiple new job alternatives. These are decisions with multiple criteria and multiple decision alternatives. Whether evaluating a belief or choosing from multiple alternatives, it is helpful to create a criteria-based decision model using readily available decision science-enabled tools. The decision model may then be applied to each alternative to enable the best decision. Please see the notes section for a decision process narrative example and related decision tools to make the best college decision. [vi]

When multiple criteria are present (metaphorically, different colors and sizes of rocks) it is very challenging for us to assess the weights (rock size) accurately. Our brains have a natural tendency to inappropriately lump criteria together as a single aggregate weight. The decision science tools will help independently separate and appropriately weigh the criteria. Also, the tools help you track the criteria and apply them to the alternatives. [vii]
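A criteria-based decision model can be sketched as a simple weighted score. This is only one common way to do it, and it is not necessarily how any particular decision tool works. The criteria, weights, ratings, and the `score_alternatives` function below are hypothetical.

```python
def score_alternatives(criteria_weights, ratings):
    """Score each alternative as the weighted average of its criteria ratings.

    criteria_weights: importance of each criterion (any positive scale)
    ratings:          for each alternative, a rating per criterion (e.g., 1-10)
    """
    total_weight = sum(criteria_weights.values())
    normalized = {c: w / total_weight for c, w in criteria_weights.items()}
    return {
        alternative: sum(normalized[c] * rating[c] for c in normalized)
        for alternative, rating in ratings.items()
    }


# Hypothetical job decision. Each criterion is weighed on its own first,
# then the same weights are applied to every alternative.
weights = {"pay": 3, "growth": 5, "commute": 2}
ratings = {
    "Job A": {"pay": 8, "growth": 4, "commute": 6},
    "Job B": {"pay": 6, "growth": 9, "commute": 5},
}

scores = score_alternatives(weights, ratings)
best = max(scores, key=scores.get)
print(scores, best)
```

Keeping the weights separate from the ratings is the point of the exercise. It prevents us from lumping the criteria together into a single gut-level impression of each alternative.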

In the next section, we continue to unpack our “changing our mind”-based decisions. We will explore naturally occurring biases known as "belief inertia.” Belief inertia creates evidence evaluation and decision challenges.

1b. Belief Inertia

Robyn Dawes (1936-2010) was a psychology researcher and professor. He formerly taught and researched at the University of Oregon and Carnegie Mellon University. Dr. Dawes said [viii]:

"(One should have) a healthy skepticism about 'learning from experience.' In fact, what we often must do is to learn how to avoid learning from experience."

This article's thesis is that, while skepticism is useful, we cannot help but learn from experience. When reasoning, we should do our best to understand and correct the biases that may impact our "changing our mind" perspectives. Dawes goes on to say:

“…making judgments on the basis of one’s experience is perfectly reasonable, and essential to our survival.”

This section explores key biases and how they impact our evidence bucket.

A higher quantity of existing evidence (a bigger bucket) may lead to a kind of “belief inertia.” This occurs because a bigger evidence bucket requires more new evidence to move the needle on the existing belief perspective. Belief inertia may be a sign you have “more to lose.” Importantly, “new evidence” may include error-correcting the existing weight of evidence. This means updating evidence you may have previously accepted. Also, a larger evidence bucket may seem overwhelming, so we may be more likely to take mental shortcuts that are subject to bias. Rationally, having more to lose should not change our ability to change our mind. If anything, a larger bucket offers more opportunities for updating, because a higher volume of existing evidence means more rocks that may need error correction than in a smaller bucket. However, there is significant research showing that belief inertia may distort or inappropriately impact how we change our mind.
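To make the "bigger bucket" effect concrete, the sketch below computes how much new contrary evidence is needed to bring a belief down to the 50% threshold. The function name and the bucket sizes are assumptions for illustration.

```python
def con_weight_to_reach(pro_weight, con_weight, threshold=0.5):
    """New con weight needed so that pro / (pro + con + new) equals the threshold.

    Solving pro / (pro + con + x) = threshold for x gives
    x = pro / threshold - (pro + con).
    """
    needed = pro_weight / threshold - (pro_weight + con_weight)
    return max(0.0, needed)  # zero if the belief is already at or below the threshold


# Two hypothetical buckets with the same 70% belief probability.
small_bucket = con_weight_to_reach(pro_weight=7, con_weight=3)
large_bucket = con_weight_to_reach(pro_weight=70, con_weight=30)
print(small_bucket, large_bucket)
```

Both beliefs start at 70%, yet the larger bucket needs ten times as much new contrary weight to reach 50%. Error-correcting existing rocks (for example, recoloring a green rock as red) moves the probability faster than adding new rocks, because it lowers the pro weight and raises the con weight at the same time.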

There is a truism, sometimes attributed to Julius Caesar, that people “Believe what they want to believe.” This happens because certain evidence tends to “harden” as a result of belief inertia. To dig deeper, please see our 6/6/22 “Additional Musings” entry for thoughts from author Leo Tolstoy and the great challenge to update our beliefs.

Hardened evidence is more challenging to evaluate appropriately (i.e., to update the rock size or color). Biased or incomplete evidence is likely to result in an evaluation error. These sorts of errors give rise to inaccurate decisions and bad outcomes. For example, hardened evidence leading to belief inertia may be caused by fear, overconfidence, or expertise:

Belief inertia and fear: In my life, wealth relates to overcoming belief inertia. As I have aged and increased my wealth base, it has become easier to make change decisions. I feel less anxious knowing I have a financial buffer in the event things do not work out as planned. I have found it easier to overcome belief inertia with a buffer. My wife and I started with very little financial wealth. I was the primary breadwinner and we had four young children. Early on, the belief inertia resulting from fear of change was more powerful. An important lesson I learned from our "low wealth" years is to let go of fear because things usually did work out. Not always as planned, but things do tend to work out. For most people, fear is a temporary state of mind. Our current state of mind anchors our perception of utility. "Utility," as discussed in this article, means the benefits received by satisfying our preferences when making a decision, net of the risks incurred by the decision. Thus, managing our anchors is critical when evaluating the expected utility of any decision. In this case, good decision-making occurs by draining the fear and waiting to be in a “fear-neutral” anchored state of mind. Fear signals us that there may be a risk, but fear is a very blunt signaling instrument. The problem is that fear has no nuance; it does not evaluate the actual likelihood or severity of the risk. The idea is to let go of the emotion but keep the probabilistic information value potentially signaled by fear. This will help to evaluate decision utility by properly netting risk from the benefit. To dig deeper, please see our notes describing how belief inertia-related uncertainty and job change unwillingness impact personal finance. [ix]

Belief inertia and overconfidence: Julia Shvets is an economist at Christ's College in Cambridge, England. In a study, Shvets and colleagues found that only about 35% of managers were accurate in self-assessing their future performance as compared to their actual current work performance, even though these managers had recently been provided evidence (i.e., a performance review) about their most recent performance. Also, these managers were biased toward being overconfident about their future performance. Shvets' study is consistent with research by Tversky and Griffin. They show that overconfidence can be found when a bright red or bright green color (extreme evidence strength, at or near 0% or 100% on the probability scale) is combined with a small rock size (small evidence weight). In the case of a job performance self-assessment, the individuals tended to overweight the smaller weighted evidence when it came with more positive evidence strength. The overconfidence bias is that people naturally misinterpret smaller bright green rocks as bigger than they really are. To dig deeper, please see the notes for our Belief Inertia and Confidence Bias framework. [x]

Belief inertia and expertise: In some situations, expertise may lead to confirmation bias. We will discuss confirmation bias more thoroughly in the next sections. Francis Fukuyama is an author and political scientist at Stanford University. In a 2022 interview, Fukuyama recalled a situation when he was an official in the U.S. State Department. This is an example of when our own expertise, or belief inertia, is combined with a perception of others' lack of expertise. The outcome may lead people to not change their minds when they should have:

"In May of 1989, after there had been this turmoil in Hungary and Poland, I drafted a memo to my boss, Dennis Ross, who was the director of the office that sent it on to Jim Baker, who was the Secretary of State, saying we ought to start thinking about German unification because it didn’t make sense to me that you could have all this turmoil right around East Germany and East Germany not being affected. The German experts in the State Department went ballistic at this. You know, they said, “This is never going to happen.” And this was said at the end of October. The Berlin wall fell on November 11th. And so I think that the people that were the closest to this situation — you know, I was not a German expert at all, but it just seemed to me logical. But I think it’s true that if you are an expert, you really do have a big investment in seeing the world in a certain way, whereas if you’re an amateur like me you can say whatever you think." [xi]

Dr. Fukuyama brought a thoughtful, yet nonexpert, approach to forecasting the fall of the Berlin Wall. Philip Tetlock suggests this approach is a surprising advantage for the best forecasters. [xii] Tetlock uses the block-balancing game Jenga as a useful metaphor. Jenga is a game where a set of similarly shaped blocks is stacked in a rectangular tower. The game objective is to pull out blocks without the tower falling. A block removed from the top of the stack is easy, as it has little resting upon it. A block taken from the bottom of the tower is more challenging, as much of the tower is resting upon it. In this case, the German policy experts' beliefs were like the bottom blocks, whereas Dr. Fukuyama was free to remove blocks from the top, as he was less invested than a paid policy expert.

The following framework maps the zones of underconfidence or overconfidence. The zones are based on the different belief inertia types previously discussed. Notice the “probability scale” for the strength of belief is turned on its side. The gray space is where we tend to be most accurate. The blue spaces are the areas where we naturally tend to bias (under or over) our assessments.

Bias occurs in many contexts. For example, car side-view mirrors provide a safety signal that danger, like another car, may be close. The mirrors are typically convex to maximize the signal field of vision. However, the convexity creates a visual bias. Many cars are required to have the following safety notice on their side-view mirrors: “Objects in the mirror are closer than they appear.” This is because the convex nature of the mirror makes the actual world appear differently than it really is. The same can be said for belief inertia. Our brains may create distortion based on how situations are viewed.

In the notes section, we discuss the Confidence Bias & Belief Inertia framework in more detail. [x] Recognizing in what circumstances you may inappropriately assess your rock evidence is key to appropriate belief updating. The blue areas are like car side-view mirrors, except our brains do not have any warning notices!

When it comes to handling belief inertia, the great British mathematician and philosopher Bertrand Russell’s timeless aphorism is on point. Russell provides an appropriate reminder that good decision-making arises from uncertainty:

“The whole problem with the world is that fools and fanatics are always so certain of themselves, and the wiser people so full of doubts.”

- Bertrand Russell, 1872-1970

Russell is suggesting wise people appreciate the probabilistic nuances of the size and color of our “rock” evidence. He suggests it is only fools and fanatics that simplify decisions as “all green!” or “all red!”

Our own brains tend to work against us when it comes to changing our minds. This may be expressed as the false dichotomy logical fallacy, familiarity bias, or confirmation bias. Please see our notes for a deeper dive into a) the difference between and b) the types of cognitive biases and logical fallacies. [xiii] As an example, I do my best not to fall into the false dichotomy trap (i.e., the black or white “thinking fast” beliefs our brain favors for processing convenience). So, if the existing belief probability is closer to 50%, I tend to be skeptical and seek new or updated evidence to challenge a held belief. I am less skeptical of beliefs that are more probabilistically certain (closer to either 0% or 100%). But I will say, I do like to challenge my substantive beliefs, no matter the existing probability. I mostly appreciate new and curated information (or “believable” information). Even if it doesn’t change my mind, I will certainly add it to the bucket, evaluate it as a pro or con, and reformulate the overall belief probability. My favorite outcome is when new information does change my mind. The feeling may start as tribal-based apprehension, but I have learned to appreciate it as liberation!

Another way to look at this approach is... we should diligently be on the lookout for 2 types of reasoning errors:

errors of commission -> misunderstanding of evaluated evidence. This is known as a false positive.

- or -

errors of omission -> evidence overlooked and not evaluated. This is also known as a false negative.

False negatives and false positives are also potential outcomes in a clinical testing setting. For example, a cancer test that initially gives a positive cancer indication, and is later followed by confirmation that no cancer exists, is known as a “false positive.” Our own brains are subject to related reasoning errors. How our brains create mental models is based on how information is perceived or evaluated. Information subject to belief inertia is more likely to cause a reasoning error. As shown in the previous graphic, the speed by which we accurately and precisely transition from point 1 to point 2 is an indication of how good we are at changing our mind. A biased outcome is when our belief inertia causes us not to achieve the best change outcome as shown in point 2. Without an intentional decision process, positive change is often delayed or never achieved.

This “changing our mind” evaluation approach is very helpful for challenging, updating, or confirming held beliefs. How quickly beliefs are challenged is usually more of a time prioritization issue. [xiv] That is, if we are time-pressed, we may not be as available when new information presents itself. In this case, we should endeavor to “tuck it away” for future evaluation. There is a body of research suggesting that time-pressed people, especially chronically time-pressed people, struggle with decision-making. This is beyond the scope of this article. Please see the notes for an excellent resource from University of Chicago Computational and Behavioral Science professor Sendhil Mullainathan. Mullainathan provides research on scarcity, including time scarcity. [xv] The important takeaway is to beware of time scarcity and its impact on your ability to both collect and evaluate evidence for your decision bucket.

1c. Information Curation

In recent decades, changing sources and availability of data have caused challenges to the acquisition of the curated information needed for quality decision-making. There was a time when we received balanced information via broadcast media networks. In the current age, social media-based narrowcasting has segmented our media information to deliver potentially biased and incorrect data. Changing our mind is particularly challenging if we lose confidence in our data sources or only consume narrowly presented viewpoints. Our evidence bucket, unattended, may be incomplete, biased, or wrong. In the words of sociologist and University of North Carolina professor Zeynep Tufekci:

"The most effective forms of censorship today involve meddling with trust and attention, not muzzling speech itself."

Narrowcasting enables confirmation bias and errors of omission. It is almost impossible to accurately update a belief if the evidence used for updating is one-sided. It is like only allowing green rocks to be dropped into your belief bucket and purposefully keeping out valid red rocks. Narrowcasting enables the antithesis of curiosity.
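The effect of a one-sided evidence feed can be shown with a small sketch. The rocks below are made-up (weight, supports) pairs, and `belief_probability` is a hypothetical helper used only to illustrate the point.

```python
def belief_probability(rocks):
    """Share of total weight that is pro; rocks are (weight, supports) pairs."""
    total = sum(weight for weight, _ in rocks)
    pro = sum(weight for weight, supports in rocks if supports)
    return pro / total


# Hypothetical evidence that actually exists in the world.
all_rocks = [(3, True), (2, True), (4, False), (1, False)]

# A narrowcast feed delivers only the green (supporting) rocks.
narrowcast_rocks = [rock for rock in all_rocks if rock[1]]

balanced = belief_probability(all_rocks)
one_sided = belief_probability(narrowcast_rocks)
print(balanced, one_sided)
```

The full evidence supports the belief only 50% of the time, but the filtered bucket reports 100%. The arithmetic is done correctly in both cases. The error of omission happens before any weighing takes place.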

The good news is that we can all be good curators of information to fill our belief bucket. [xvi]

Think of your data sources as being part of your very fertile information garden. It is a garden holding your unbiased, high-quality information sources but also may harbor unwanted data weeds or invasive data plants. Your job as the master gardener is to constantly weed your information garden and introduce new, high-quality information plants. Also, every good garden has a variety. Being open to diverse and curated information species is the key to a healthy garden and appropriately updating your evidence bucket.

Curated information species likely have a modest cost. Just like anything else of value, those responsible for providing high-quality, unbiased information should be paid ... like how high-quality food servers receive tips. The dark side of not paying for your information is the increased likelihood of weedy, biased, low-quality data. In the documentary "The Social Dilemma," former Google design ethicist Tristan Harris mentioned:

"If you are not paying for the product, you are the product."

The idea is that paying a modest price is worth it in order to NOT be a tool for those purveying biased or incorrect data.

Keep in mind that unbiased, high-quality information that causes you to decrease your belief probability may initially feel weedy. In our notes, we provide a reference for digging deeper into information curation, including the timeless perspective of former U.S. president Theodore Roosevelt. [xvii]

Matthew Jackson, an economist at Stanford University, provides an aligned perspective. In a recent interview [xviii], he said:

“People tend to associate with other people who are very similar to themselves. So we end up talking to people most of the time who have very similar past experiences and similar views of the world, and we tend to underestimate that. People don’t realize how isolated their world is.”

A consulting firm example

I worked for KPMG for 10 years, from 2009 to 2019. KPMG is a “Big 4” Audit, Tax, and Advisory firm. I was a Managing Director. My decision to leave KPMG went something like this:

First, to understand why I left, we must start with my reason for being there in the first place. My overall mission (or “why” statement) for being at KPMG was twofold.

To help financial services clients, and,

to help junior team members develop and progress in their careers.

We were incredibly successful. I am proud of my teams for:

Helping many banks and millions of their loan customers through the financial crisis,

Standing up an industry Artificial Intelligence enabled technology and operating platform to improve loan quality and operational productivity, and

Growing a strong university recruiting and development program.

I would periodically compare my ability to execute against these mission statements. When it came time to leave, the decision was based on my analysis that KPMG and I were prospectively no longer aligned enough for me to execute against the mission (thinking probabilistically, anticipated forward alignment < 50%). Keep in mind that KPMG is an excellent firm. But ultimately, I had evolved and anticipated needing a different environment to meet mission goals.

1d. Listening to our Gut

At this point in the article, it may seem that typical decisions are made from evidence that has known information value or “language.” This is certainly NOT true! For the remainder of this section and in section 2, we will make use of neuroscience and context from a resource called Our Brain Model. This model provides background on how the brain operates to make decisions. The model shows how evidence with language is processed differently than evidence from our gut. Also, it will help you understand the source of cognitive biases and related brain operations that may cause less-than-ideal decision outcomes.

Separate from language-based evidence, we also tend to listen to our gut. Our gut has no left hemisphere-generated language and manifests itself as right hemisphere-based feeling or intuition. That is, we are not exactly sure why our gut believes something….It just does and we just do. Per our brain model, we are referring to the difference between the high-emotion & low-language pathway, as opposed to the low-emotion & high-language pathway.

Please see Our Brain Model for the pathway overlays.

As the model suggests, it is our left hemisphere that houses our language. In the terms Nobel laureate Daniel Kahneman uses in Thinking, Fast and Slow, our “fast,” emotion-based thinking will bypass our left hemisphere. The left hemisphere is on the low-emotion & high-language pathway. Without left hemisphere processing, it is difficult to add language to evidence. To be clear, these mental pathways are highly dynamic and interactive. People process along both these mental pathways all the time. These pathways describe two major mental processing segments that impact changing our minds.

First, you may already anticipate the problem with this gut-based approach. If some of the evidence is held in our low-language processing centers, how do we evaluate it to build our probability understanding? Good question! It is difficult to evaluate a problem if your experience and learning provide little language. This is where listening to your gut and seeking to build language comes into play. I call it “living in it.” [xix]

It may mean listening to and learning from believable [xx] others that have already successfully “languagized” a perspective. Or,

Sometimes, it may mean just going with it, doing your best, and adapting as you learn.

The next section discusses integrating our gut into the decision-making process and changing our minds.

2. Active Listening and Living In It

In this section, we explore how the different parts of our brain interact to make decisions. We will explore "educated intuition" as an approach that uses both the low-emotion & high-language and the high-emotion & low-language mental pathways introduced in the last section. We will then discuss how our brain naturally increases knowledge and effectiveness via a natural feedback loop.

2a. Educated Intuition

Related to changing our minds, University of Chicago economist Steve Levitt interviewed scientist Moncef Slaoui. [xxi] Dr. Slaoui ran the U.S. Government’s Operation Warp Speed, which started in 2020, to develop COVID-19 vaccines. Extraordinary speed was required to build the new vaccine. The result was incredible. Operation Warp Speed was a tremendous success. Future historians will likely consider it one of mankind’s outstanding achievements. This environment was a great example of quickly building a knowledge base with gut, curated information, and objectively informed judgment. From our brain model framework, the COVID-19 vaccine developers had to bring and build evidence from both their high-emotion & low-language pathways and the low-emotion & high-language pathways. Dr. Slaoui discusses Operation Warp Speed’s environment as necessitating a combination of pathways. He calls this “educated intuition.” The following interview exchange is particularly relevant:

A COVID-19 vaccine development example

Levitt:

“I'm really curious if you have any advice as someone who obviously thinks logically and scientifically about how to change the minds of people who don't think logically and scientifically. When you run into people who are ideological or anti-science or conspiracy believers, do you have strategies for being persuasive?”

Slaoui:

“That's a very good question. I would say my first advice, which is slightly tangential to what you said, I always say I'm not going to spend a lot of my time describing the problem. I quite quickly, once I have somewhat described the problem, I need to start thinking about solutions because that's how you move forward. Otherwise, if you continue describing the problem, you stay still. I use somewhat of a similar strategy of building up an argument and engagement where people understand that I'm genuine and authentic and I'm not at any cost trying to change their mind.

I'm actually mostly trying to understand how they think and start from there, because in order to really convince somebody of something, you need to truly exchange views, which means active listening and understanding why they say something. Some people it's impossible, but otherwise that would be my starting point. And my advice is:

Active listening, and

Once you have some grasp of the problem, think of solutions that also create energy and momentum to move forward.”

Specific to the weight of evidence model, Dr. Slaoui's comments suggest our perspective is a combination of right and left-hemisphere-based pathways as mentioned in the last section. That is, some weight of evidence stimulates the high-emotion & low-language pathway and some stimulates the low-emotion & high-language pathway. Active listening is an approach to help the other person process and reveal the basis for their thinking. It will also help the listener understand what drives the other person’s view and the degree to which they may change their mind in the short term. Please see our notes for a deeper dive into career decisions, including our brain's interactions when learning to like skills, tasks, and missions. [xxii]

2b. Expanding our evidence bucket

Expanding or growing our understanding is part of the brain's naturally occurring self-reinforcing systemic cognition process. It starts with learning, generally via practicing and doing tasks. Improvement and mental efficiency then occur as tasks are habituated. Improvement generally leads to positive feedback from those benefitting from the task execution. Positive feedback stimulates reward impulses via dopamine, a naturally occurring neurotransmitter. This dopamine-based emotion information tag encourages more practice... and the loop continues. Please see the “Expanding our evidence bucket” graphic. As poet Sarah Kay’s aphorism goes: “Practice does not make perfect, practice makes permanent.” Grace Lindsay is a computational neuroscientist. Dr. Lindsay said:

"Dopamine – which encodes the error signal needed for updating values - is thus also required for the physical changes needed for updating that occur at the synapse. In this way, dopamine truly does act as a lubricant for learning." [xxiii]

Also, Robyn Dawes, who was introduced earlier, calls this positive feedback process "reciprocal causation." An important takeaway is to be intentional about building your habits... your brain is listening!
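To make the feedback loop concrete, here is a minimal sketch of error-driven value updating, the textbook "prediction error" rule that Dr. Lindsay's quote alludes to. The function name, the learning rate, and the reward of 1.0 are illustrative assumptions and do not come from the cited sources.

```python
def update_value(value: float, reward: float, learning_rate: float = 0.2) -> float:
    """Nudge the current value estimate toward the reward just observed."""
    prediction_error = reward - value  # the "error signal" in the quote
    return value + learning_rate * prediction_error


# Hypothetical practice loop: each repetition earns the same positive feedback.
value = 0.0
history = []
for _ in range(10):
    value = update_value(value, reward=1.0)
    history.append(round(value, 3))

print(history)  # the estimate climbs toward 1.0, quickly at first, then more slowly
```

The shrinking steps illustrate habituation: early practice produces large updates, while later practice mostly confirms what has already been learned.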

Steven Sloman, a Brown University psychology professor, suggests an effective “changing our mind” strategy: have someone OBJECTIVELY explain how something works, instead of providing potentially FEELING-based reasoning for why they should or should not do that something. That is, Sloman’s approach is a good way to help people both a) reveal their true (often lack of) understanding of a problem and b) anchor them in a productive mindset to be open to changing their minds. Also, Sloman suggests that we are part of a community-based hive mind. That is, we do not think alone. [xxiv] As such, understanding how others impact our thinking is critical to understanding our own thinking. Back to our Brain Model, it is the Right Hemisphere that actively seeks to connect with the outside world. We are genetically wired to be part of a wider network of thinking.

The hypothesis is that those with a high proportion of high-emotion & low-language evidence in their evidence bucket will take longer to change their mind. The reasoning is that they may lack the internal understanding to evaluate the “how they feel” evidence with language and critical thought. Said another way, people with beliefs generated more from emotion (and less from objective information) are likely to be slower to change their minds. Change-enabling reflection and evaluation generally require language. [xxv]

Practically, significant decisions almost always require judgment that may tap into your lower language evidence. In the decision science world, these are known as evidence with complex characteristics. The decision science tool suggested in this article is helpful when evaluating complex evidence.

To some degree, those with higher education as it relates to the topic will be more immediately open to adapting to a quickly changing environment. Even if the topical education is related but not always specific, they are likely able to frame and structure the problem, ask good questions, and fill holes in understanding. This enables moving forward and creating solution momentum. Per the vaccine development example, Dr. Slaoui called this “educated intuition.” For Operation Warp Speed to be successful, he needed teammates possessing the relevant language to quickly understand the problem and move to a solution. In other words, Dr. Slaoui needed senior teammates that could quickly contextualize the problem and differentiate between “what they know” and “what they don’t know.” Clearly, there was much they did not know. Notably, the COVID-19 virus was new and the required speed-to-market was new. Those with lower contextual-based education may be slower to adapt when a higher proportion of understanding is found in lower language brain centers.

To be clear, “education” as used here, is in the broad sense. That is, education may arrive from formal education, experience, practice, self-teaching, or some combination thereof. It is not necessarily related to formal or credentialed education. Regardless of the source of education, building language by accessing the left hemisphere is helpful when building your total evidence bucket.

Also, living in it or building solutions is a great way to clarify decisions and add to your total evidence bucket. That is, do not let yourself get bogged down by not acting because you want more information. After gathering initial evidence, often “doing” is the best way to collect more information and then error-correcting along the way. When possible, attempt to road-test your initial evidence-based decision. To dig deeper, please see the notes for additional resources. We provide an article describing the natural interaction between our induction-based reasoning (trying new stuff) and deduction-based reasoning (learning about new stuff.) The additional resources provide examples from Alexander Hamilton, Charles Darwin, Thomas Hobbes, and others. [xxvi]

Next is an example of a low-risk road test that provided high-value information.

An investment firm example

In 2019, I took a flyer by agreeing to be the Chief Operating Officer of an investment management firm. They had a very interesting blockchain technology I thought would be a good fit for a number of banking contexts. I certainly researched the industry and the firm’s leadership team, but ultimately I decided the only way to really learn was by doing. I persistently worked to build firm capability, learned a different business, developed client opportunities, networked, attended conferences, etc. Ultimately, the owner was unable to make payroll. I immediately left the firm.

Was I disappointed? A little. But mostly, other than my time, I took a little risk and learned a ton about something new and interesting. I’m grateful for the experience and also grateful the experience only cost a little time.

“I know every gain must have a loss. So pray that our loss is nothing but time.”

- The Mills Brothers, the song "Till Then"

Our laws and religions may codify evidence to provide certainty. Also, many have certain personal standards they adhere to.

For example, most religions support the golden rule - “Treat others as you want to be treated.” Laws generally make it illegal to assault or kill someone.

The point is, when it comes to changing your mind, there are certain understandings bounded by cultural or personal weight of evidence certainty. This generally makes decision-making easier as it reduces the need to weigh certain evidence. [xxvii] For personal standards, it is helpful to be thoughtful and reflective, recognizing that increasing entropy is the only physics-based certainty in life. [xxviii] Certainly, some have very structured personal standards. As such, understanding your and others' “non-negotiables” is important to building and weighing your evidence bucket. It is also enlightening to periodically test your non-negotiables. Some may evolve to become part of your probabilistic-based beliefs. Being on the lookout for improperly “hardened” evidence is a best practice. It is interesting that some people, particularly younger folks, sometimes respond to evidence-related questions with an enthusiastic "Definitely!" or "100%!" As this article suggests, wisdom comes from realizing a proper response may take more evaluation and will likely lead to a more nuanced, evidence-weighing response.

3. Pulling the trigger

We have discussed some finer points for building and evaluating our belief bucket, including the importance of curating information and how to use objective information and your gut. So, let’s say the scale tips, your supporting belief probability drops below the 50% threshold, and you are faced with a change opportunity, then what? It’s time to change your mind and take action! The reality is that most people hold on longer than they should. Changing your mind includes messy emotions and habits that intrude upon the decision-making process but do not always add value to the decision. An all too common cultural teaching is that "change is a failure." In this section, we provide tools to help you overcome our cultural fallacies and leverage our natural mental operations to make the best change decisions.

In the consulting firm example, the decision to leave was challenging. There had been much success. Many great working relationships had been developed. There was comfort in knowing how to work the organizational system. Existing sales pipelines and business relationships had been built. There was some fear of going from the known to the unknown. In summary, there were many excuses not to make a change. I have not regretted the change for a moment.

As a bit of advice, please consider these sorts of decisions like an economist. Consider anticipatory marginal value - that is, the "bucket" value created incrementally and likely in the future. In the case of a job change, the value is based on your or your organization’s comparative advantages. The following questions help evaluate comparative advantage:

How do your skills or capabilities compare to others?

What is your accomplished combination of skills and capabilities compared to others in your market?

Then, how do you anticipate applying those capabilities in the future?

It is often the second question, about the combination of skills, that drives your unique value. To dive deeper, please see our notes for why a combination of skills is so valuable. [xxix]

In the consulting firm example, I realized my ability to build and scale technology-enabled solutions would be more challenging in an accounting firm than in a technology company. As such, I better aligned my range of skills-based comparative advantages by leaving a firm with technology-based comparative disadvantages. [xxx]

Comparative advantage is only one kind of rock (or criterion). There are other criteria considerations, beyond comparative advantage. Developing and weighing the “what’s important to you” criteria is an outcome of your bucket analysis. A best practice for reducing belief inertia is developing and independently evaluating decision criteria (rocks). Once one decides to make a change, multiple alternatives for change may be considered. We discussed this earlier as a more complex, multi-criteria and multi-alternative, decision.

Side note: A decision-making smartphone app:

Definitive Choice is a smartphone app. It provides a straightforward user experience and is backed by time-tested decision science algorithms. It uses a proprietary "Decision 6(tm)" approach that organizes the criteria (what is important to you?) and alternatives (what are the choices?) in a series of bite-size ranking decisions. Since it is on your smartphone, you can use it while you are doing the research. It is like having a decision expert in your pocket. The results dashboard provides a rank-ordered list of "best choices," tailored to your preferences. Apps like this enable decision-makers to configure their own choice architecture.

Also, Definitive Choice comes pre-loaded with many templates. You will want to customize your own criteria, but the preloaded templates provide a nice starting point. In the context of this article, Definitive Choice will help you determine the size and color of your rocks (criteria) for your belief bucket. It will also help you apply those rocks to different alternatives. This will help you negotiate the best outcome.

3a. Change is not a failure

Annie Duke is a world-champion poker player, author, and cognitive sciences researcher. In an interview for her book "Quit," [xxxiii] she said:

“Richard Thaler is a Nobel laureate in economics and what he said to me is, ‘Generally we won’t quit until it’s no longer a decision.’ In other words, there’s no hope. You’ve butted against the certainty; your startup is out of money, and you can’t raise another round. You’re in a job with a boss that is so toxic that you have used up all your vacation and sick days and you’re still having trouble getting yourself into work.”

Thaler and Duke’s comments relate to a forced change. Sometimes change is the result of a forcing function. Running out of money is certainly an extreme forcing function example. Changing prior to the forcing function will often avoid a “crash landing.” A crash is often more negative than a proactive change made beforehand. Thus, recognizing the need and preparing for change is important.

Job evaluation and potential job changes relate to the decision-making process. Successful gamblers like Annie Duke are incredibly good decision-makers. Their good decisions are outcomes of good decision processes characterized by:

Objectively evaluating the probability and risks of potential gambles, and

Understanding and integrating their and other players' emotions.

Good gamblers anticipate essential game success drivers and the nuances of the environment in which the game is played. Good gamblers embrace both objective and emotional information in their decision-making. Gambling and job evaluation share a common bond. They are both subject to uncertainty. They both require decision processes integrating factual information, forecasts, and emotion.

The next graphic provides the high-level job evaluation decision framework, including a "premortem."

Job evaluation decision framework

The proactive job evaluation approach includes a “premortem.” [xxxiv] The premortem approach begins by recalling the criteria for why you accepted the job, or made another decision, in the first place. Go through an exercise of weighting the criteria and applying them to the current job. The smartphone app mentioned earlier is perfect for this exercise. Hopefully, at this point, the benefits are still above 50%. (Meaning - your belief bucket has more green rocks than red rocks.) Perform this exercise periodically. Be aware that your criteria categories (the names of the rocks) are reasonably stable. However, your criteria weights (size or color of the rocks) are likely not as stable. Most companies, jobs, or important life situations regularly evolve. Is the recent work evolution positive or negative to your criteria weight? Does that new boss have a positive or negative impact on your overall criteria weight? Often, the meaning of a work or other life change takes time to impact your overall criteria weighting. Sometimes, your weighting evolves so slowly as to be almost imperceptible, as in the apologue "Boiling the frog." That is why periodic premortem updating is critical!

Then, the next step is to write your premortem. A premortem is a credible story about the future where your goal benefits dropped below 50%. Focus your story on a criterion or several criteria (One or more rocks) that may cause a benefit-reducing change. What plausible set of events would cause the change? This is a future scenario that would compel you to seriously consider changing your job, including actively searching for alternatives. For extra credit, you could write multiple premortem stories! While the premortem story has not happened yet, you are preparing yourself in the event it does. If you are using the app, you may create a separate premortem alternative and score it using the results of your premortem narrative. In the notes section is a citation for potential job change criteria. [xxxv]

Use your premortem as a preparation tool for your next performance review. This is a great way to prepare for a productive conversation! I view performance reviews as a two-way street. Your boss or company deserves to know what you think of them as much as you deserve to know what they think of you. A two-way dialogue is a sign of a healthy relationship. You will ultimately earn the respect of your senior colleagues by providing a fair and honest appraisal. My experience is that most supervisors will return your thoughtful candor with a frank discussion about what the company may or may not be able to do to address the premortem concerns. This information will be very valuable to help you plan a potential change.

Think of a premortem as "Boiling your own frog." That is, taking ownership of your own honest and accurate evaluation, so you can make changes before the water gets too hot! This premortem scenario planning exercise will prepare you to 1) identify and evaluate an evolving environment and 2) emotionally prepare yourself to change when your job benefits drop below 50% - that is, when your belief bucket is more than half full of red rocks.
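As a rough illustration of the bucket arithmetic behind the 50% threshold, the sketch below computes the weighted share of green rocks for today's job and for one premortem story. The `Rock` class, the criteria, and every weight are hypothetical examples. This is not the algorithm used by the smartphone app mentioned earlier.

```python
from dataclasses import dataclass


@dataclass
class Rock:
    criterion: str
    weight: float   # size of the rock: how much the criterion matters to you
    supports: bool  # True = green (supports staying), False = red


def green_share(bucket: list[Rock]) -> float:
    """Return the fraction of total rock weight that is green."""
    total = sum(rock.weight for rock in bucket)
    if total == 0:
        raise ValueError("the bucket needs at least one weighted rock")
    return sum(rock.weight for rock in bucket if rock.supports) / total


# Hypothetical current evaluation.
current = [
    Rock("compensation", 3.0, True),
    Rock("location", 4.0, True),
    Rock("boss", 2.0, True),
    Rock("growth", 1.0, False),
]

# Hypothetical premortem story: a forced relocation and a new boss.
premortem = [
    Rock("compensation", 3.0, True),
    Rock("location", 4.0, False),
    Rock("boss", 2.0, False),
    Rock("growth", 1.0, False),
]

for name, bucket in (("current", current), ("premortem", premortem)):
    share = green_share(bucket)
    # A tie at exactly 50% is treated as a signal to consider the harder choice.
    verdict = "stay" if share > 0.5 else "consider a change"
    print(f"{name}: {share:.0%} green -> {verdict}")
```

Rerunning the same calculation at each periodic review makes a slow drift in the weights visible long before the water gets too hot.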

This may seem a little weird. Why would you plan for failure? It turns out, our cultural teaching about "failure" is a big problem. Our society teaches us change is somehow a failure. It is not! Change is a reasonable outcome when a situation changes and the benefit drops below the desired level. Change is healthy. Change is life! So a premortem is a way to prepare yourself for something normal and healthy. In my experience, people confuse "Grit" or "Never Give Up" determination as suggesting we should be less willing to change. This simply is not true. In the "Changing Our Mind" model, grit is needed for:

a) the active evaluation of the pursuit,

b) the dogged spirit and desire to pursue, and

c) making an appropriate change.

On the other hand, not making appropriate changes defies rationality. If you think of your grit as an investment, not making an appropriate change is like wasting the investment.

3b. Build your BATNA

In the case of changing jobs, it is good to consider multiple employer alternatives. To start, it is good not to limit your job alternative set, except to exclude alternatives that are clearly inferior. For example, if a job requires particular skills that you do not have and have no desire to acquire, then leaving it out of your alternative set is reasonable. Choosing and evaluating alternatives takes effort. Keep in mind that the effort is its own reward! Also, prematurely limiting your alternatives may create a biased choice set. This is known as “satisficing.” Using the tools suggested in this article reduces the likelihood and impact of satisficing. [xxxi] Being clear about your criteria and alternatives, and providing resources (mainly your time), is important. Think of the time and money spent evaluating your job criteria and alternatives as an investment. It is likely one of the most lucrative investments you will make in your life. The investment will almost certainly pay off.

Once alternatives are introduced to the decision-making framework, one may need to enter into negotiations with the various alternatives. Via negotiation, one may be able to negotiate a better alternative outcome. For example, if a big green rock from your belief bucket is “flexible work mobility,” then your negotiation may uncover your alternative is willing to provide work location flexibility. The key to good negotiation is 1) being clear about your criteria list and definition, and, 2) being clear about the weight of the different criteria. That is, clearly communicating the size and color of each rock in your evidence bucket. Please see the notes for a timeless parable and prioritization framework called "Big Rocks." Stephen Covey also references this parable in his book about time prioritization. [xxxii] This parable suggests your big rocks should be aligned with your personal mission. Naturally, your “Big Rocks” criteria will be unique to your preferences.

When negotiating, a best practice is to build your "BATNA" set. BATNA is an acronym that means "Best Alternative To a Negotiated Agreement." Building a robust BATNA set leads to better negotiation outcomes. To dig deeper, please see our notes describing the use of BATNA-enabled negotiation techniques. We provide a job change example.

Some people feel uncomfortable with negotiating. This is unfortunate. Good negotiation leads to better outcomes for all. Good negotiation is a form of good communication, where all parties express their key preferences. This creates a better platform for productive, long-term relationships. Also, it takes time and energy to build our BATNA set. Research shows that people will commonly make alternative-reducing "satisficing" shortcuts that may be counterproductive. Focusing on your BATNA is the foundation of good negotiation and a productive, long-term relationship. [xxxvi]

3c. Beware of sunk costs

Finally, do not consider sunk costs or sunk value. Indeed, there may be some credentialing benefits to past accomplishments. However, when it comes to evaluating your bucket’s pros and cons, only consider the value or costs anticipated in the future. For example, the three "pride points" mentioned in the previous consulting firm example are an example of past sunk value. As the KPMG change was considered, those points should be sunk and not considered as part of the belief bucket’s value comparison. While sunk in a decision-making context, I will always be incredibly proud of my teammates for the service we provided our clients and their customers. I call it “sunk with pride!”

What if you just can’t decide? What if, in the final analysis, the weighted evidence probability is about 50% and the decision could fall either way? The advice, when in doubt, is to make the MORE CHALLENGING decision. For example, if you are deciding between staying and leaving a job, and the weighted evidence is about a tie, I would leave the job. Why? While the pro and con evidence may seem evenly weighed at 50%, complete objectivity is almost impossible. That is, your natural familiarity bias and confirmation bias are an invisible “hand on the scale,” inappropriately weighting the evidence toward the emotionally easier decision. In a related coin toss experiment, Steve Levitt came to a similar conclusion. Also, the neurological mechanisms of confirmation bias will naturally seem to decrease the weight of evidence contrary to past beliefs. [xxxvii]

Pulling it together

Please remember, not making a decision is a decision! Belief inertia is a powerful force. Being a good decision-maker is certainly easier said than done, especially since our own brain sometimes works against us. However, I did find this advice helpful when pulling the trigger, once the anticipatory bucket analysis showed belief updating made sense.

To summarize, “pulling the trigger” occurs at the end of a deliberate belief updating process. The characteristics of this deliberate "changing our mind" process are:

Each rock (criterion) is well-defined.

Each rock's size and color (weight) are updated with your latest perspective.

Each rock's size and color (weight) are independently determined.

Pulling the trigger is easier with a premortem or related advance planning.

There are inexpensive and high-value smartphone apps available to help with this evaluation.

The following graphic shows how belief updating leads to better decisions. Belief updating is an ongoing probabilistic evidence evaluation leading to the best decisions. If you are new to this "changing our mind" model, it is often helpful to start by inspecting your childhood beliefs.

There is an old saying:

"Winners never quit and quitters never win."

I'm sure my children, like most children, heard this saying when they were young. My optimistic belief is that this saying was intended to help children. By providing simple rules, the desire was to help children develop grit and resilience. As adults, we need to update our initial beliefs. As adults, hopefully, we have developed the necessary grit and resilience. We provide the "Changing our mind" model as a means to take ownership of the beliefs initially formed in childhood. The "Changing our mind" approach helps update those initial beliefs. The "Changing our mind" model outcome is enabled by the observation:

"Quitters often do win!"

My wife and I have four children. When this article was published, they were all in their twenties. My wife and I did the best we could to raise our children in a loving and enriching environment. My hope is that their childhood takeaways include the permission and the expectation to inspect and update their childhood beliefs. Ultimately, ongoing belief updating and change are both necessary and thrilling parts of life.

4. Conclusion

Eliezer Yudkowsky said [xxxviii]:

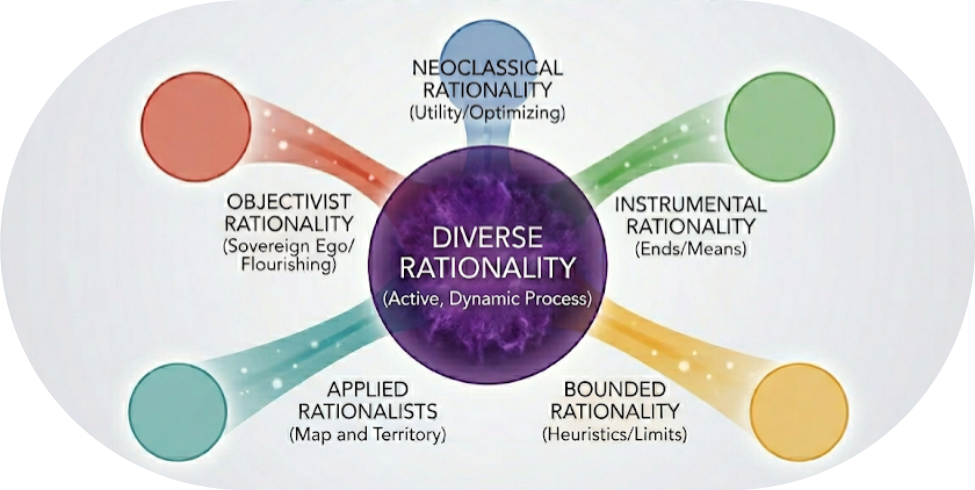

“Rationality is not for winning debates, it is for deciding what side to join.”

In this case, we have presented a rational approach to help evaluate our mind. This evaluation may lead to changing our mind about which side of a belief to join. We consider changing our mind as a deliberate and liberating experience.

Philip Tetlock, a University of Pennsylvania professor and forecasting researcher, said [xxxix]:

“…beliefs are hypotheses to be tested, not treasures to be guarded.”

We have suggested belief testing tools to help you:

Identify and overcome biases,

Manage data curation challenges,

Understand the impact of different mental processes, and

Provide structure to make great decisions.

Providing good decision tools is one thing; actively using them is another thing entirely. Recognizing and internally constraining cognitive biases, belief inertia, or emotional responses is not easy. In my experience, I’ve come to appreciate most people are good at making certain kinds of decisions. However, as decision complexity increases, especially in a world teeming with uncurated data, our difficulty constraining cognitive biases naturally decreases decision-making accuracy and precision. The best decision-makers recognize the internal constraint challenges and surround themselves with appropriate choice architecture. These are decision-making tools available to be configured and curated by the decision-maker. The best part about these tools is they are easy to use. After a brief familiarization process, you will become your own choice architect! A good decision process starts with good habits, routines, and understandings. This leads to utilizing cost-effective decision science-enabled technology when situation complexity warrants.

At this point, I am not ready to conclude this article. I’d like to live in it more, check other believable sources, and allow my left hemisphere-based language and right hemisphere-based gut to process. I am very interested to learn about others’ “changing our mind” lived experiences.

5. Notes and Musings

[i] Via our neurobiology, tribalism is a natural part of all of us and can be used for good or bad outcomes. Being aware of how our brains operate is helpful to identify and appropriately prevent being unknowingly influenced by tribalism.

Hulett, Origins of our tribal nature, The Curiosity Vine, 2022

[ii] For this article, we accept the existence of individual beliefs as a given starting point. The following are resources providing relevant context and additional research on how belief sources may be formed or impacted. Among other topics, these articles explore how culture and family upbringing impact our initial belief formation. We then show how our initial belief formation may impact how we grow and succeed in our careers.

The first, by Gneezy and List, provides the cultural impact of matriarchal v patriarchal society. Probably not surprisingly, cultural upbringing and cultural standards make a big difference in individual beliefs.

The second provides a risk management perspective and experience from Saujani. This provides reasoning for why appropriate play-based risk-taking as a child may improve life outcomes.

The third provides an explanation of how unseen neurodiversity, defined as the diversity of thinking types across all people, may impact our work environment as we emerge from the pandemic. Sources include Pew Research, Cain, and Kahneman. "How we think" is certainly a basis for our beliefs.

The fourth is psychology research from Carol Dweck. She suggests people are either in a growth mindset or a fixed mindset. This profoundly impacts their ability to change their mind. I believe our childhood experiences significantly contribute to the development of these mindsets.

Hulett, How braver people, especially our daughters, achieve more career success, The Curiosity Vine, 2022

Hulett, An investor’s view: Teaching our daughters to be brave, The Curiosity Vine, 2022

Hulett, Sustainable Diversity in the post-pandemic world, The Curiosity Vine, 2021

Dweck, Mindset: The New Psychology of Success, 2006

[iii] Yudkowsky, Map and Territory, 2018: See chapter 11.

[iv] Hulett, Origins of our tribal nature, The Curiosity Vine, 2022

[v] Simply defined, "evidence" is the link between cause and effect. Or, in more decision science-like language, evidence is the link between criteria and alternatives. Next are a couple of examples. The form of the evidence could be as simple as your judgmental answer to the cause-and-effect-linking evidentiary question. When possible, it is best practice to obtain independent support for the evidentiary question. For example: When considering the salary criterion for a good job, determine the comparable market salary evidence for your role from an independent source.

Changing a job example:

Beyond collecting the evidence, our evaluation includes weighing the criteria. That is, we need to assess the question: "How important is the causal linked evidence to determine the effect outcome?" This is akin to the size and color of the rock in our evidence bucket. For example, your location may be the most important criterion. So it is a big rock. If your current job wants to transfer you to Alaska, and you would prefer to live in New York, then the location's rock would be large and red.

We also include a “bankable loan” example. Banks generally have credit policies that define the criteria and evidentiary requirements to make loans. This example is simplistic, generally based on the lending "5 C's of Credit" standard. The loan decision is usually the responsibility of a loan underwriter. Much of the assessment has become automated via loan assessment algorithms like FICO scores.

Making a loan example:

Yudkowsky, Map and Territory, 2018: See Chapter 20.

Segal, 5 C's of Credit, Investopedia, 2021

[vi] A close cousin to the simpler “change my mind” decision is the more complex “make up my mind” decision. Here is an example of how I used objective and judgmental decision-making information to create a belief probability assignment program. This was to help my kids make up their minds about their college choice. The college decision is an incredibly complex decision. One may have 5-10 criteria and 5-10 college alternatives. This could create almost 100,000 decision combinations! Our brains are simply not wired to effectively handle such a complex decision without support.

When my kids were in the process of making their college decision, I created a decision program that ranks and weights key decision criteria and college alternatives. The outcome was a single weighted score to help them compare colleges. It includes both objective and emotion-based criteria. It includes costs. This ultimately informed their college decision. It provided confidence they were making the best college decision.
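
To make the idea of a single weighted score concrete, here is a minimal sketch in Python. It is not the actual college decision program. The criteria, weights, college names, and ratings below are hypothetical placeholders, and the weighted average is only one simple way such a score could be computed.

```python
# Hypothetical criteria weights (importance, 1-10). Not taken from the article.
weights = {"cost": 9, "academics": 8, "location": 5, "campus_feel": 6}

# Hypothetical ratings of each college on each criterion (1 = poor, 10 = excellent).
# "campus_feel" stands in for an emotion-based criterion.
ratings = {
    "College A": {"cost": 8, "academics": 7, "location": 6, "campus_feel": 9},
    "College B": {"cost": 5, "academics": 9, "location": 8, "campus_feel": 6},
    "College C": {"cost": 7, "academics": 6, "location": 9, "campus_feel": 7},
}


def weighted_score(college_ratings, weights):
    """Return the weighted average rating across all criteria."""
    total_weight = sum(weights.values())
    weighted_sum = sum(weights[c] * college_ratings[c] for c in weights)
    return weighted_sum / total_weight


def rank_colleges(ratings, weights):
    """Return (college, score) pairs sorted from highest to lowest score."""
    scores = {name: weighted_score(r, weights) for name, r in ratings.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


ranking = rank_colleges(ratings, weights)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Changing a weight and rerunning the ranking is a quick way to see how much a single criterion, or "rock," drives the final comparison.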

(Please see our article The College Decision for the decision model. Since this article was originally published, I initiated a non-profit organization with a mission to “Help people make a better life with better decisions.” Our non-profit recently stood up a college decision app.)

From a parenting standpoint, this was a great way to teach a little decision science and to help build my kids' decision-making confidence. By the way, three of my kids went to James Madison University, and one went to Christopher Newport University. Both are excellent state schools, and our kids have thrived. For more information on the college decision, please see our articles The College Decision - Framework and tools for investing in your future, The Stoic’s Arbitrage, and How to make money in Student Lending.

[vii] "The Affect Heuristic in Judgments of Risks and Benefits" studied whether subjects mixed up their judgments of the possible benefits of a technology (e.g., nuclear power), and the possible risks of that technology, into a single overall good or bad feeling about the technology.

Finucane et al., The Affect Heuristic in Judgments of Risks and Benefits, Journal of Behavioral Decision Making, 13, no. 1 (2000): 1-17.

Independently assessing criteria (or “rocks”) is critical to appropriately weighing the criteria. The Affect Heuristic is the naturally occurring mental mechanism that inappropriately jumbles criteria.

[viii] Dawes, Rational Choice in an Uncertain World: The Psychology of Judgement and Decision Making, 1988

[ix] Hulett, They kept asking about what I wanted to do with my life, but what if I don't know?, The Curiosity Vine, 2021

[x] Huffman, Raymond, Shvets, Persistent Overconfidence and Biased Memory: Evidence from Managers, American Economic Review (conditionally accepted), 2021

This framework is based on research from Tversky and Griffin. It shows how the strength and weight of evidence typically interact with individual belief inertia and confidence bias. As in the job performance self-assessment study by Huffman, Raymond, and Shvets, people tend to be overconfident in their self-assessment. This is likely not because they fail to consider previous negative assessments, but because they underweight them as evidence. In Adam Smith's The Theory of Moral Sentiments, Smith suggests we naturally delude ourselves. Smith says,

It is so disagreeable to think ill of ourselves, that we often purposely turn away our view from those circumstances which might render that judgment unfavorable.

The physicist Richard Feynman said,

"The first principle is that you must not fool yourself - and you are the easiest person to fool."

In the "Belief inertia and fear" case, fear caused me to overweight the risks associated with a job change. In the "Belief inertia and expertise" case, it was the "experts" who were overconfident, as in the lower right part of the framework. However, it was Fukuyama's apparent lack of expertise that caused his superiors to underweight his assessment that the wall was likely to fall. It is interesting how belief inertia may have opposite bias impacts (over or under) in the same situation. It would seem that listening to smart but situationally independent people is a good practice. Such people do not have an organizational investment in their expertise. Such is the role of consultants.

Griffin, Tversky, The weighing of evidence and the determinants of confidence, Cognitive Psychology, Volume 24, Issue 3, 1992

[xi] An interview, Freakonomics Radio, Stephen Dubner with Francis Fukuyama, "How to Change Your Mind", episode 379, 2022

Steve Levitt interviewed Dr. Jennifer Doudna. Doudna is a Nobel Laureate chemist who invented the revolutionary gene-editing capability CRISPR. At one point, Levitt asked her about what makes her successful. Doudna’s response is aligned with overcoming expertise-based belief inertia:

“…people in general who are coming into a field where there’s not really a pre-existing expectation of them. And so, it does give them freedom to do things that are outside of the norm.”

An interview, People I Mostly Admire, Steve Levitt with Jennifer Doudna, “We Can Play God Now”, episode 67, 2022

[xii] Tetlock, Gardner, Superforecasting: The Art and Science of Prediction, 2015

The phrase "Perpetual Beta," used in the introduction, also comes from Tetlock and Gardner's book.

[xiii] The definitions of “logical fallacies” and “cognitive biases” are related but distinct. One may think of reasoning as a systemic process, using a standard Inputs -> Process -> Outputs (IPO) systems model.

In this model, think of cognitive biases as the degree to which our mental reasoning INPUTS are impacted (or "biased") in terms of how we perceive information. We cite familiarity bias and confirmation bias in this article, though there are many other cognitive biases. On the other side of the reasoning system, think of logical fallacies as the degree to which the reasoning OUTPUTS are fallaciously impacted. The false dichotomy logical fallacy may be the result of cognitive biases. The following is a very good book on systems thinking:

Meadows, Thinking in Systems: A Primer, 2008

A potential cause of the false dichotomy logical fallacy is familiarity bias. If you have a choice between two options in your life or work — a safe one and a risky one — which one will you take? In a series of experiments, psychologists Chip Heath and Amos Tversky showed that when people are faced with a choice between two gambles, they will pick the one that is more familiar to them even when the odds of winning are lower. Our brains are designed to be wary of the unfamiliar. Familiarity bias is our tendency to overvalue things we already know. When making choices, we often revert to previous behaviors, knowledge, or mindsets. By the way, the literature on cognitive bias has grown substantially in recent decades. One of my favorite sources is Daniel Kahneman’s Behavioral Economics classic:

Kahneman, Thinking, Fast and Slow, 2011

An excellent discussion of heuristics and cognitive biases may be found in:

Kahneman, Slovic, Tversky (editors), Judgment Under Uncertainty: Heuristics and Biases, 1982

Below, we discuss confirmation bias in note [xv].

[xiv] Understanding how our personality interacts with our thinking process is generally helpful when addressing held beliefs. Time prioritization and personality preferences may interact. That is, we are generally more likely to perform certain thinking tasks when those tasks are a net energy provider rather than a net energy drag. See the following article for more information on introversion and extroversion thinking processes.

Hulett, Creativity - For both introverts and extroverts, The Curiosity Vine, 2022

[xv] "Tuck it away" runs some risk. What if you do not remember to write it down? What if you think, "That is so far away from my understanding, I'm not going to bother with it...I'm too busy!" The risk here is known as "Confirmation Bias." Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms or supports one's prior beliefs or values. It is challenging to be disciplined, especially when busy. I manage this by keeping ongoing notes and periodically referring to them. I use an informal but relatively structured method that is described in our article Curiosity Exploration - An evolutionary approach to lifelong learning.

For more information on the scarcity-based impacts of being time-pressed, please see:

Mullainathan, Shafir, Scarcity: Why Having Too Little Means So Much, 2013

[xvi] We discuss the social challenges related to data curation and good decision-making in the following article. We recognize that inherent social challenges may make good decision-making and related outcomes more challenging for individuals in some social groups. We also provide tool suggestions to help counteract social challenges.

Hulett, The Great Social Equalizers: Data and Decision-Making, The Curiosity Vine, 2021

[xvii] Please see our article for an effective approach to successfully curating your information. Below is our concluding paragraph, speaking to our civic duty to be a good data curator.

Hulett, Information curation in a world drowning in data noise, The Curiosity Vine, 2021

In 1910, former U.S. President Theodore Roosevelt (1858-1919) gave a speech at the Sorbonne in Paris, France, titled Citizenship in a Republic. This is the same speech that gave us the timeless “Man in the Arena” passage. Roosevelt discusses the importance of the “average person” in our democracy.

“But with you and us the case is different. With you here, and with us in my own home, in the long run, success or failure will be conditioned upon the way in which the average man, the average woman, does his or her duty, first in the ordinary, every-day affairs of life, and next in those great occasional crises which call for heroic virtues.”

- Theodore Roosevelt, bolding added

Along the lines of Roosevelt, I consider information curation by the “average person” to be one of the most important individual duties in our democracy. Unfortunately, the current trend is less toward information-curation-based reason and more toward non-curated, data-based emotional reaction. Those who sow non-curated data do so for a reason: they wish to provoke a loosely considered emotional response. Those emotions often come from an internal place contrary to information curation, that is, from a place of information insecurity. The recent storming of the U.S. Capitol certainly appears to have elements motivated by information insecurity. The personal and societal cost of information insecurity is high. Information curation takes effort and has a cost, but it is most important to the health of our democracy. Fortunately, the effort of information curation is its own reward. Information curation provides the calming confidence of curiosity enablement, plus a security blanket to repel the demons of information insecurity.

[xviii] An interview, Freakonomics Radio, Stephen Dubner with Matthew Jackson, "How to Change Your Mind", episode 379, 2022

[xix] My mentor and CEO of Rockland Trust Company, Chris Oddleifson, was famous for saying “Live in it.” He would say it in the context of getting a working team unstuck and on to the business of creating solutions.

[xx] The term “believability” is used in this context by Ray Dalio.

Dalio, Principles, 2017

[xxi] An interview, People I Mostly Admire, Steven Levitt with Moncef Slaoui, “It’s Unfortunate That It Takes a Crisis for This to Happen”, episode 9, 2020.

[xxii] For the skills, tasks, and missions reference, please see the first section of the following article, called "Background - our attitudes, behaviors, and career segments."

Hulett, They kept asking about what I wanted to do with my life, but what if I don't know?, The Curiosity Vine, 2020

[xxiii] Lindsay, Models of the Mind: How Physics, Engineering and Mathematics Have Shaped Our Understanding of the Brain, 2021

[xxiv] Sloman, Fernbach, The Knowledge Illusion: Why We Never Think Alone, 2017

Robin Dawes provides an aligned suggestion:

“Do not propose solutions until the problem has been discussed as thoroughly as possible without suggesting any.”

Dawes, Rational Choice in an Uncertain World, 1988

[xxv] This relates to the Dunning–Kruger effect. People may overestimate their ability until they increase their bucket to a certain size AND with a higher proportion of language-informed evidence. This also reminds me of Bertrand Russell’s quote mentioned earlier.

[xxvi] Hulett, Curiosity Exploration - An evolutionary approach to lifelong learning, The Curiosity Vine, 2021

[xxvii] NN Taleb, in the book cited below, considers long-term religious principles to be part of tail risk management. They exist because they work and have stood the test of time, like the Golden Rule.

“....religion exists to enforce tail risk management across generations, as its binary and unconditional rules are easy to teach and enforce. We have survived in spite of tail risks; our survival cannot be that random.”

NN Taleb is an amazing thinker. However, I still endeavor to challenge my beliefs and the supporting evidence, even if that evidence includes religious doctrine. While I may not ultimately change a religion-grounded belief, it is a best practice to challenge it.

Taleb, Skin In The Game, 2018

[xxviii] In the following article, we present the physics-based argument for why the goal of life is to "Fight Entropy." The article also connects the dots between the perception of time and entropy. The article presents research from Neuroscientists and Physicists such as Genova, Taylor, Price, Buonomano, and Dyson.

Hulett, Fight Entropy: The practical physics of time, The Curiosity Vine, 2021

[xxix] We discuss the power of developing and leveraging a combination or range of unique skills and capabilities. See the following article:

Hulett, The case for range: Why “polymathic” people are so valuable, The Curiosity Vine, 2021