Redefining Rationality: The Act of Calibrating Internal Maps to the Evolving World

- Jeff Hulett

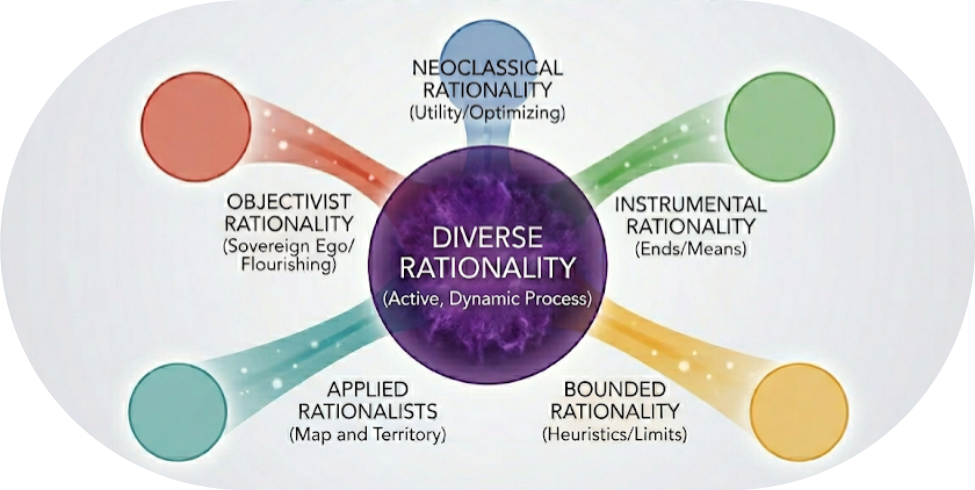

Many people view rationality as a cold, rigid set of rules. This narrow definition often creates resistance because it suggests a singular, correct way to live. However, this article introduces and defines Diverse Rationality to better align with how people actually experience decision-making in their lives. We move rationality from a static destination to an active process of internal calibration. To implement this diverse perspective, we utilize Bayesian Inference as the practical approach for updating our understanding of the world in real-time.

A medical emergency quickly reveals the flaw in traditional, rigid thinking. Imagine driving a spouse to the hospital while they suffer from severe bleeding. A red traffic light appears. The law requires a full stop.

An observer on the sidewalk sees only a car and a red light. To that observer, the rational act remains stopping because they lack your internal data. Inside the car, you possess New Evidence the observer cannot see. Your Existing Belief in traffic safety is suddenly under pressure from a more urgent internal standard: the survival of your family.

You are not merely breaking a rule. You are performing an active, internal update. You weigh the low probability of a collision against the near-certainty of your spouse losing consciousness. In this high-stakes moment, rationality is not a fixed social behavior. It is the dynamic process of shifting your internal weights in real-time. Running the light functions as a rational act because it maintains integrity with your most vital internal standard, even as the external world demands compliance with a different one.

About the author: Jeff Hulett leads Personal Finance Reimagined, a decision-making and financial education organization. He teaches personal finance at James Madison University and provides entrepreneurial services. Check out his book -- Making Choices, Making Money: Your Guide to Making Confident Financial Decisions.

Jeff is a career banker, data scientist, behavioral economist, and choice architect. Jeff has held banking and consulting leadership roles at Wells Fargo, Citibank, KPMG, and IBM.

The Generalization: Rationality as a Vector

Every human decision functions as a vector possessing both direction and magnitude. Unlike static models that judge a decision by its proximity to a universal "correct" point, Diverse Rationality views the decision as an active movement. Three invisible, time- and situation-dependent variables drive this vector, distinguishing it from static or "bounded" definitions.

First, the Sovereignty of Ends. Individuals operate within a subjective hierarchy of priorities that an observer cannot see. In the bleeding spouse scenario, the "end" shifts from law-abiding citizenship to life-saving intervention. While other models might call this a "bias" or a "deviation," Diverse Rationality recognizes it as a logical re-prioritization. An observer using an outdated priority list will falsely diagnose irrationality because they measure against the wrong destination.

Second, Strategic Resource Allocation. Standard "bounded" models often view limited cognitive bandwidth as a failure of human hardware. Diverse Rationality treats this as a deliberate, logical adaptation. In high-stress moments, the brain sheds non-essential data to protect the most vital goal. This is not a "bound" on performance; it represents the active optimization of energy toward the highest internal standard available at that microsecond.

Third, the Impermanence of Information. Knowledge is not a static library; it is a flow subject to temporal decay. A decision that appears logical at one point becomes obsolete as new data arrives. Unlike definitions demanding consistency over time, Diverse Rationality views stagnant consistency as a potential trap. Integrity exists in the speed of the update, ensuring the internal map remains synchronized with an ever-changing environment.

The Shoulders of Giants: A Helpful but Incomplete History

This new framework stands upon the contributions of past thinkers. Each provided a necessary building block for a model of human choice.

Neoclassical economists, such as Paul Samuelson and Milton Friedman, established the logic of optimization. They modeled human behavior as the maximization of utility. However, their models assumed universal goals. They created a "Robo-Rationalist" that ignored the hidden diversity of personal values.

Philosophers like David Hume identified the technical architecture of choice. They distinguished between instrumental rationality, the efficiency of means, and epistemic rationality, the pursuit of truth. These thinkers explained the mechanics of the engine and the map, yet they ignored the subjective motivations of the driver.

Herbert Simon and Gerd Gigerenzer introduced the reality of limits. Simon identified bounded rationality, noting that human hardware possesses finite memory and energy. Gigerenzer showed how fast and frugal heuristics adapt to messy environments. While helpful, these views often frame human choice as a shortfall relative to computers rather than a sovereign adaptation.

Applied rationalists, including Steven Pinker and Eliezer Yudkowsky, emphasized the logic of the map. They popularized the idea that internal beliefs must accurately predict the territory of reality. While their tools offer immense value, they often imply a universalist bias: the existence of a “correct” map.

Ayn Rand contributed the moral dimension. She defined rationality as the choice to think and the recognition of reality as a requirement for individual flourishing. Her work established the sanctity of the driver. Still, her absolutism suggested a single heroic path, whereas Diverse Rationality recognizes that the heroic choice remains personal and dynamic.

Diverse Rationality: The Unseeable Process

Rationality does not represent a destination. It represents a continuous act of calibration. Because an individual’s internal budget, life stage, and preferences remain private, their rational standard stays invisible to outside observers. External judgment often fails because the observer cannot see the internal map the individual uses to navigate.

However, the challenge of visibility extends inward. We often struggle to see our own maps clearly. Internal biases, ego, and the desire for social acceptance can obscure our true priorities.

Consequently, we must redefine irrationality. It does not consist of a deviation from social norms or mathematical averages. Instead, irrationality represents a breakdown in the internal loop. It occurs when an individual fails to identify their own unique standards honestly or makes decisions in direct conflict with those identified standards due to inertia or fear. One acts irrationally only when a gap exists between what the internal judge knows and what the external actions produce. True rationality requires the courage to look at the internal map without flinching.

The Four Nevers: Why Static Maps Melt

The need for a process-focused rationality arises from the inherent limits of human knowledge. In a stable world, a fixed map might suffice. However, real-world systems are governed by the Four Nevers, which render any static understanding of reality obsolete over time or across situations. These four principles create a state of constant flux, requiring the individual to adopt a mindset of continuous updating.

1. Knowledge is Never Complete (Rumsfeld’s Never): This principle represents a temporal deficit. Every individual faces "unknown unknowns" because the future remains unmanifested. No matter how much data one collects in the present, the map remains a surface-level representation. It fails to capture the hidden depth of reality or the shifting events of the timeline. Because the present serves as an imperfect predictor of the future, our starting point must be one of humility.

2. Knowledge is Never Static (Goodhart’s Never): The world is not a passive laboratory. Human agents observe, interpret, and adapt to their environment. When we measure a system or set a goal, people change their behavior to meet that target. This dynamism means that what worked yesterday may fail today. Knowledge is ephemeral because human agents are always "gaming" the map.

3. Knowledge is Never Centralized (Hayek’s Never): This principle represents a cross-sectional deficit. Vital information exists in dispersed, localized forms across the population. The specific insights needed for a decision often live at the "edges" of a network rather than in a central database. Because no two people stand in the same spot in the present, no two people share the same priorities or data sets.

4. Knowledge is Never Invariant (Kahneman’s Never): Humans are not consistent calculators. Judgment varies based on psychological context, emotional states, and how information is framed. Even with the same facts, an internal map shifts depending on the environment. We are subject to internal "noise," making past choices unreliable predictors of future ones.

These Four Nevers serve as the source of dynamism in human affairs. They show how the "Territory" is always shifting. If knowledge is never complete, static, centralized, or invariant, then rationality cannot be a fixed destination. It must be a dynamic process. This reality necessitates a formal method for updating our internal maps: the Bayesian process.

Bayesian Inference: Implementing the Process

Diverse rationality requires a functional operating system to manage a world of melting maps. Bayesian inference provides this mechanism. The process follows a systematic, four-step movement to bridge the gap between current knowledge and future possibilities.

The logic is expressed through this simple relationship, Bayes' rule:

$$\text{Posterior} = P(EB \mid NE) = \frac{P(NE \mid EB) \times P(EB)}{P(NE)}$$

Here P(EB) is the Prior, P(NE | EB) is the Likelihood, and P(NE) is the Baseline Evidence. Think of this math as an intuitive transformer. It transforms your Existing Belief (EB) by applying New Evidence (NE). The outcome of the transformation is called the Posterior. Since rationality is a process, the Posterior becomes the Prior for the next iteration of your diversely rational process.

This equation works on the two underlying inputs mentioned earlier:

New Evidence (NE): This is incremental evidence that adds to your existing knowledge. For example, “My spouse is bleeding” is new evidence.

Existing Belief (EB): This is your “going in” belief or assumption BEFORE the new evidence is presented. “Stopping at a stop light and obeying the law” is the existing belief.

To manage uncertainty effectively, the process treats beliefs as probabilities:

The Prior (Existing Belief): Your starting probability. It represents the strength of your current belief based on past experience.

The Likelihood: If your Existing Belief were true, how likely is it that this New Evidence would appear?

The Baseline Evidence: How likely is this evidence to occur across the whole world, including situations where your belief is wrong? A high baseline "dilutes" the evidence. Common evidence is weak evidence.

The Posterior (Updated Map): Your revised probability after weighing the prior, likelihood, and baseline. This becomes your new prior for the next update.
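The four terms above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the author's own tool: the function name `bayes_update` and the parameter `false_alarm_rate` are hypothetical, and the sketch assumes a two-hypothesis world (the belief is either true or false), so the Baseline Evidence is the signal path plus the noise path. The loop at the end feeds each Posterior back in as the next Prior, using three made-up observations that are assumed to be independent.

```python
def bayes_update(prior: float, likelihood: float, false_alarm_rate: float) -> float:
    """Return the Posterior after one piece of New Evidence.

    prior            : P(belief), the Prior before the evidence
    likelihood       : P(evidence | belief true), the Likelihood
    false_alarm_rate : P(evidence | belief false), the "noise" that
                       feeds the Baseline Evidence (hypothetical name)
    """
    for name, p in (("prior", prior), ("likelihood", likelihood),
                    ("false_alarm_rate", false_alarm_rate)):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"{name} must be a probability in [0, 1], got {p}")

    signal = prior * likelihood                # belief true AND evidence seen
    noise = (1.0 - prior) * false_alarm_rate   # belief false AND evidence seen
    baseline = signal + noise                  # Baseline Evidence, P(evidence)
    if baseline == 0.0:
        raise ValueError("evidence is impossible under both hypotheses")
    return signal / baseline                   # the Posterior


# Sequential updating: each Posterior becomes the Prior for the next observation.
# These observations are hypothetical and assumed independent of one another.
belief = 0.01
for likelihood, false_alarm_rate in [(0.9, 0.01), (0.8, 0.1), (0.7, 0.3)]:
    belief = bayes_update(belief, likelihood, false_alarm_rate)
    print(f"updated belief: {belief:.3f}")
```

Starting from a 1% prior, the first observation reproduces the roughly 48% posterior of the bleeding spouse case study; each later observation keeps moving the map, which is the point of treating rationality as a process rather than a destination.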

The bleeding spouse scenario serves as a real-time application of the Bayesian framework in a high-stakes environment. While the driver begins with a strong commitment to the law, the sudden arrival of sensory data forces an immediate re-evaluation of what is most "rational" in that specific moment. This shift is not an abandonment of logic, but rather a precise mathematical update where new, urgent information overrides a previous baseline assumption.

The Bayesian Case Study: Revisiting the Bleeding Spouse

You sit at a red light with a bleeding spouse. Your Existing Belief (EB) is that traffic laws ensure safety and must be followed. Suddenly, New Evidence (NE) arrives. You must use the Bayesian transformer to see if the probability of a true emergency outweighs your commitment to the signal.

Imagine your total world of possibilities as a House.

The Prior — Building the Rooms: Before looking at the blood, you know life-threatening emergencies are rare. You build a tiny 1% closet for "Emergency" and a massive 99% living room for "Normal Trip."

The Likelihood — Finding the Signal: You see the blood. If a life-threatening emergency is real, the probability of finding blood in that tiny closet is 90%.

The Baseline — Measuring the Noise: You must also account for "noise." In the massive 99% Normal Room, there is a tiny 1% chance you would see blood for a trivial reason (like a minor cut).

The Solution: The Internal Update

To find your new perspective, you compare the "Emergency Blood" (the signal) against the "Total Blood" found in the entire house (the signal plus the noise).

Emergency Signal: 1% (Prior) × 90% (Likelihood) = 0.9%

Total Baseline Evidence: 0.9% (Signal) + 0.99% (Noise: 99% × 1%) = 1.89%

The Posterior: Divide the signal by the total evidence (0.9% / 1.89%). Your probability of a true emergency is now about 48%.
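The house arithmetic can also be checked by counting trips instead of multiplying percentages. This sketch is an illustration rather than part of the original case study: it assumes a hypothetical house of 10,000 trips and uses Python's standard `fractions.Fraction` so every count stays exact.

```python
from fractions import Fraction

TRIPS = 10_000                    # hypothetical size of the "house"
prior = Fraction(1, 100)          # 1% closet: true emergency
likelihood = Fraction(90, 100)    # 90%: blood seen, given a true emergency
noise_rate = Fraction(1, 100)     # 1%: blood seen on a normal trip (minor cut)

emergency_trips = TRIPS * prior               # trips in the closet
normal_trips = TRIPS - emergency_trips        # trips in the living room
signal = emergency_trips * likelihood         # emergency trips that show blood
noise = normal_trips * noise_rate             # normal trips that show blood
posterior = signal / (signal + noise)         # share of bloody trips that are emergencies

print(f"emergency trips with blood: {signal}")
print(f"normal trips with blood:    {noise}")
print(f"posterior: {posterior} = {float(posterior):.1%}")
```

Of the 189 trips that show blood, 90 are true emergencies, so the posterior is 90/189 (10/21), about 47.6%, which rounds to the 48% above.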

Diverse Rationality and Agency

In mere split seconds, your map has undergone a tremendous change. You moved from a 1% starting assumption to nearly 50%. While a 48% probability means there is still roughly a coin-flip chance (about 52%) that this is a false alarm, the asymmetry of risk gives you your agency.

An observer on the sidewalk remains anchored to the 1% map because they cannot see your spouse. But your Posterior—your updated reality—indicates that the cost of waiting now dwarfs the cost of proceeding. You carefully run the red light, not as a lawbreaker, but as an individual maintaining integrity with the highest value: the preservation of life.
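The "asymmetry of risk" argument can be made explicit by weighing each action's expected cost under the posterior. The cost figures below are purely hypothetical placeholders on an arbitrary scale, not values from the article, and the helper `expected_cost` is likewise illustrative. The sketch only shows how a roughly 48% chance of emergency can favor proceeding when the harm of waiting during a real emergency is far larger than the harm of proceeding carefully.

```python
POSTERIOR_EMERGENCY = 90 / 189   # about 0.48, from the house calculation

# Hypothetical costs on an arbitrary scale (assumptions for illustration only).
# Each entry gives the cost if it IS an emergency and if it is NOT.
costs = {
    "wait at the light": {"emergency": 100.0, "normal": 0.0},
    "proceed carefully": {"emergency": 5.0, "normal": 5.0},
}


def expected_cost(action: str, p_emergency: float) -> float:
    """Probability-weighted cost of an action across both states of the world."""
    c = costs[action]
    return p_emergency * c["emergency"] + (1.0 - p_emergency) * c["normal"]


for action in costs:
    print(f"{action}: expected cost {expected_cost(action, POSTERIOR_EMERGENCY):.1f}")

best = min(costs, key=lambda a: expected_cost(a, POSTERIOR_EMERGENCY))
print("lower expected cost:", best)
```

With these made-up numbers, waiting is cheaper at the 1% prior (1.0 versus 5.0), but proceeding is cheaper at the 48% posterior; the decision flips once the probability of an emergency passes 5%. The sidewalk observer, still holding the 1% map, reaches the opposite conclusion from the same cost table.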

Practice as Preparation

The sight of a bleeding spouse is unsettling. In moments of panic, the human brain rarely defaults to a Bayesian approach; instead, it reaches for the "easy button" of a static rule or a fear-based heuristic. This is where most observers fail in their judgment. Because Diverse Rationality is anchored to an individual’s invisible map, an external observer has no way of knowing if your action is a rational update or a panicked mistake. They cannot see your internal "weights."

However, the more you practice this Bayesian movement during calm moments, the more it becomes an "on-board" intuition rather than a labored calculation. By training your Internal Judge to weigh the signal against the noise, you bridge the gap between instinct and agency. You ensure that when a crisis hits, you possess the clarity to act with your diverse rationality—not because it is visible to the world, but because it is true to the territory you alone are navigating.

Respecting the Four Nevers: Managing Information and Incentives

The Bayesian approach requires an understanding of the sources providing your data. Every piece of evidence carries an origin point, and those sources often operate under specific incentives. Once you understand that data providers follow their training and "Standard Operating Procedures" (SOPs), you can become your own best advocate.

In the American medical "data provider" context, SOPs maintain business discipline but reduce the attending physician's individual judgment. An SOP often dictates: if a test result is "X," then the billable procedure is "Y." In the business SOP context, choosing "Y" over "Z" (a non-surgical alternative) without considering "Z" constitutes an Error of Omission. Without asking the right questions, a patient may never realize "Z" represents a worthy decision path.

A Bayesian Case Study: The Ruptured Disc Dilemma

In my 30s, I faced this dilemma. An MRI confirmed a disc rupture. My attending physician, who was also a back surgeon, recommended immediate surgery (Procedure Y). However, my Bayesian process signaled a warning. My starting EB (Prior) favored a conservative, non-surgical default (Z).

I recognized the surgeon's recommendation as a product of the medical business’s SOP. To refine the Baseline Evidence, I asked: "How often do MRIs show bulges in people with no pain?" Research shows this baseline is high. Many healthy people have bulging discs. This "noise" diluted the surgeon's evidence.

I sought a "clean" diagnosis from a retired neurosurgeon. He confirmed that the physiological risks of waiting remained low and that conservative care (Z) addressed the root cause. Because the baseline of MRI findings was so high, the specific evidence for immediate surgery was too weak to override my prior. I chose physical therapy, and the issue resolved. This conservative route preserved my options. One can always choose surgery later, but one can never undo a surgery already performed.
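The dilution effect of a high baseline can be illustrated with the same rule. Every number here is a hypothetical placeholder, not a clinical statistic or the author's actual figures: the sketch assumes a 10% prior that immediate surgery is needed and a finding that appears 95% of the time when it is needed. It then compares a finding that is rare among people who do not need surgery (5%) with one that is common (50%). The helper name `posterior` is also hypothetical.

```python
def posterior(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Bayes' rule for a yes/no hypothesis: signal divided by Baseline Evidence."""
    signal = prior * p_evidence_if_true
    baseline = signal + (1.0 - prior) * p_evidence_if_false
    return signal / baseline


PRIOR_NEEDS_SURGERY = 0.10   # hypothetical prior, not a clinical figure
P_FINDING_IF_NEEDED = 0.95   # hypothetical: finding present when surgery is needed

# Compare a rare finding with a common one (both rates hypothetical).
for label, p_finding_if_not_needed in [("rare finding", 0.05), ("common finding", 0.50)]:
    lr = P_FINDING_IF_NEEDED / p_finding_if_not_needed   # likelihood ratio
    post = posterior(PRIOR_NEEDS_SURGERY, P_FINDING_IF_NEEDED, p_finding_if_not_needed)
    print(f"{label}: likelihood ratio {lr:.1f}, posterior {post:.0%}")
```

With the common finding, the likelihood ratio drops from about 19 to 1.9, and the posterior stays near the prior (about 17%, versus about 68% for the rare finding). Common evidence is weak evidence.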

Today’s medical system is rife with misaligned incentives. While most medical practitioners intend to do good, the system itself gives those same practitioners incentives to drive profitable outcomes. This places the patient in a difficult spot: they must navigate hidden incentives that can create a disconnect between intentions and outcomes. This reality makes Bayesian reasoning more important than ever.

The Internal Judge: The Impartial Spectator

Adam Smith introduced the concept of the Impartial Spectator. This internal supervisor acts as the advisor for the Bayesian update. The spectator remains the only observer capable of seeing the standard. Rationality represents a state of internal consistency where the individual has nothing to hide from their own judge. Success involves integrity between actions, values, and the updated model of the world. A Bayesian decision system supports accuracy and helps correct the inevitable bias that leaks into the process.

The Decision System Enables Integrity

Shifting the rationality focus from a fixed point to a dynamic process empowers the individual. We move from judging people against a static social norm toward encouraging the refinement of their own decision process. Rationality belongs to the individual. By restoring its definition to an action, we restore dignity to the driver in the red light scenario and the patient facing surgery.

The wise individual remains full of doubts because they respect the Four Nevers. Knowledge remains incomplete and the environment remains dynamic. We should stop striving to "be" rational as a final state. Instead, we must practice the process of acting rationally. This requires keeping the inner world and the real world in a state of honest, updated, and diverse conversation.

Resources for the Curious

Bayes, Thomas, and Richard Price. "An Essay towards Solving a Problem in the Doctrine of Chances. By the Late Rev. Mr. Bayes, F. R. S. Communicated by Mr. Price, in a Letter to John Canton, A. M. F. R. S." Philosophical Transactions of the Royal Society of London 53 (1763): 370–418.

De Finetti, Bruno. Theory of Probability. 2 vols. Translated by Antonio Machí and Adrian Smith. New York: John Wiley & Sons, 1974–1975. (Original work published 1970).

Friedman, Milton. Essays in Positive Economics. Chicago: University of Chicago Press, 1953.

Gigerenzer, Gerd. Gut Feelings: The Intelligence of the Unconscious. New York: Viking, 2007.

Hayek, Friedrich A. "The Use of Knowledge in Society." The American Economic Review 35, no. 4 (1945): 519–30.

Hume, David. A Treatise of Human Nature. Edited by L. A. Selby-Bigge. 2nd ed. Oxford: Clarendon Press, 1978. (Original work published 1739–1740).

Jaynes, E. T. Probability Theory: The Logic of Science. Edited by G. Larry Bretthorst. Cambridge: Cambridge University Press, 2003.

Kahneman, Daniel. Thinking, Fast and Slow. New York: Farrar, Straus and Giroux, 2011.

Laplace, Pierre-Simon. Philosophical Essay on Probabilities. Translated by Andrew I. Dale. New York: Springer-Verlag, 1995. (Original work published 1814).

McGrayne, Sharon Bertsch. The Theory That Would Not Die: How Bayes' Rule Cracked the Enigma Code, Hunted Down Russian Submarines, and Emerged Triumphant from Two Centuries of Controversy. New Haven: Yale University Press, 2011.

Pinker, Steven. Rationality: What It Is, Why It Seems Scarce, Why It Matters. New York: Viking, 2021.

Ramsey, Frank Plumpton. "Truth and Probability." In The Foundations of Mathematics and Other Logical Essays, edited by R. B. Braithwaite, 156–98. London: Kegan Paul, Trench, Trubner & Co., 1931.

Rand, Ayn. The Virtue of Selfishness: A New Concept of Egoism. New York: New American Library, 1964.

Silver, Nate. The Signal and the Noise: Why So Many Predictions Fail—but Some Don't. New York: Penguin Press, 2012.

Simon, Herbert A. Models of Bounded Rationality. Cambridge, MA: MIT Press, 1982.

Smith, Adam. The Theory of Moral Sentiments. Edited by D. D. Raphael and A. L. Macfie. Oxford: Oxford University Press, 1976. (Original work published 1759).

Thaler, Richard H. Misbehaving: The Making of Behavioral Economics. New York: W. W. Norton & Company, 2015.

Yudkowsky, Eliezer. Rationality: From AI to Zombies. Berkeley, CA: Machine Intelligence Research Institute, 2015.
